Google Releases Gemma 4 Under Apache 2.0 License: Most Powerful Open-Source AI Models With 400 Million Downloads

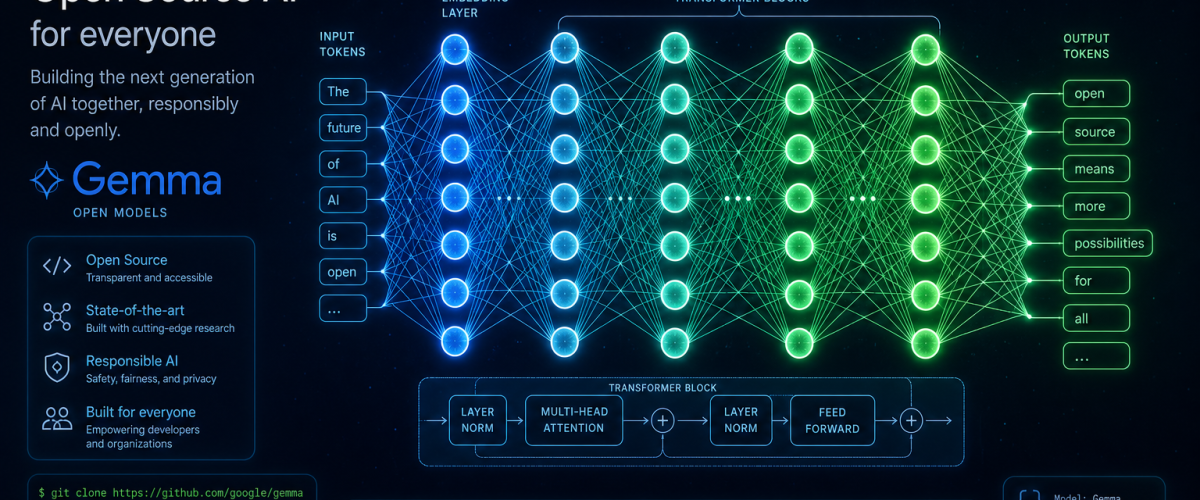

Google has released Gemma 4, its most intelligent and capable open-source AI model family to date, under the industry-standard Apache 2.0 licence. The release, announced on 2 April 2026, makes Gemma 4 the first model family in the Gemmaverse to carry an OSI-approved open-source licence, giving developers broad freedom to modify, deploy, and commercialise AI applications built on Google’s latest technology.

Built on Gemini 3 Technology

Gemma 4 is built from the same research and technology that powers Google’s proprietary Gemini 3 model, making it the most capable model family available for local deployment on personal hardware. The models are purpose-built for advanced reasoning and agentic workflows, delivering what Google describes as “an unprecedented level of intelligence-per-parameter.”

The release comes in four versatile sizes: Effective 2B (E2B), Effective 4B (E4B), 26B Mixture of Experts (MoE), and 31B Dense. This range ensures that Gemma 4 can run on everything from mobile devices and edge hardware to powerful workstations and cloud infrastructure, giving developers flexibility to choose the right model for their specific use case and computational budget.

The Mixture of Experts architecture used in the 26B model is particularly significant. MoE models activate only a subset of their parameters for any given input, so they can approach the quality of a much larger dense model while using significantly less compute per token. The full set of weights still has to be loaded, so the saving is in computation rather than memory. This approach has become a defining trend in modern AI development, enabling more efficient inference without sacrificing capability.
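The routing idea can be illustrated with a toy sketch. This is a generic top-k MoE layer, not Gemma 4's actual implementation: the number of experts, the value of `k`, the scalar "experts", and the hand-written router scores are all assumptions made for illustration. In a real model the router scores come from a learned layer applied to each token.

```python
import math

def top_k_route(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    # Softmax over the selected logits only, so the k weights sum to 1.
    peak = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

def moe_layer(x, experts, router_logits, k=2):
    """Run only the routed experts and mix their outputs by router weight."""
    routes = top_k_route(router_logits, k)
    output = sum(weight * experts[i](x) for i, weight in routes)
    used = [i for i, _ in routes]
    return output, used

# Hypothetical toy experts: each one just scales its input.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]
# Hypothetical router scores for one token (normally produced by the model).
router_logits = [0.1, 2.0, -1.0, 2.0]

y, used = moe_layer(1.0, experts, router_logits, k=2)
print(f"experts run: {sorted(used)} of {len(experts)}, output: {y}")
```

Only two of the four experts are evaluated for this input, which is where the compute saving comes from; all four still have to exist in memory.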

Apache 2.0: A Watershed Moment for Open AI

The decision to release Gemma 4 under the Apache 2.0 licence marks a significant shift in Google’s approach to open-source AI. Previous Gemma releases used custom licences that, while permissive, came with restrictions that limited commercial use in certain scenarios. The Apache 2.0 licence removes these constraints, providing developers with well-understood legal terms for modification, reuse, and further development.

Google framed the licensing decision around three principles: autonomy, allowing developers to build and modify models as they see fit; control, enabling local and private execution that does not rely on cloud infrastructure; and clarity, providing standard licence terms that eliminate legal ambiguity.

The move puts Gemma 4 on equal footing with other prominent open-source AI projects and could accelerate the model’s adoption among enterprises that require clear intellectual property terms before integrating AI into production systems. The growing use of AI across industries, from fintech to healthcare, makes licensing clarity increasingly important for responsible deployment.

400 Million Downloads and the Gemmaverse

Since the launch of the first Gemma generation, developers have downloaded Gemma models over 400 million times, building a vibrant community of over 100,000 model variants. This ecosystem, known as the Gemmaverse, includes fine-tuned models for specific tasks, multilingual adaptations, domain-specific variants for healthcare and legal applications, and creative implementations that push the boundaries of what open models can achieve.

The scale of community engagement is remarkable by any standard. The 100,000-variant milestone works out to roughly 25,000 community versions per official release, a figure that implies a count of four official Gemma releases (100,000 / 4 = 25,000). This ratio speaks to the demand for high-quality open models that can be adapted to specific requirements without the constraints of proprietary API access.

Benchmarks and Performance

While Google has not published exhaustive benchmark comparisons, initial testing by independent researchers and AI benchmark platforms indicates that Gemma 4 models outperform competing open-source models of similar size on most standard evaluations. The 31B Dense model, in particular, is reported to approach the performance of much larger models on tasks involving complex reasoning, code generation, and multi-step problem solving.

The agentic workflow capabilities of Gemma 4 are a key differentiator. As AI applications increasingly move from simple question-answering to autonomous task execution, the ability to plan, reason across multiple steps, use tools, and recover from errors becomes critical. Gemma 4’s architecture has been specifically optimised for these agentic patterns, making it suitable for applications like automated research, code review, customer service workflows, and data analysis pipelines.
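The plan, act, observe, and recover cycle described above can be sketched in a few lines. This is a generic agent loop under stated assumptions, not Gemma 4's tool-calling interface: `run_agent`, the action dictionary format, and `scripted_model` (a stand-in for a real model call) are all hypothetical names invented for this example.

```python
def run_agent(model_step, tools, task, max_steps=5):
    """Ask the model for an action, run the tool, and feed the result back."""
    history = [("task", task)]
    for _ in range(max_steps):
        action = model_step(history)
        if action["type"] == "final":
            return action["answer"], history
        try:
            result = tools[action["tool"]](*action["args"])
            history.append(("observation", result))
        except Exception as exc:
            # Report the failure to the model so it can re-plan
            # instead of aborting the whole task.
            history.append(("error", f"{type(exc).__name__}: {exc}"))
    return None, history  # step budget exhausted without a final answer

def scripted_model(history):
    """Stand-in for a model call: makes one bad tool call, then corrects it."""
    kind, payload = history[-1]
    if kind == "task":
        return {"type": "tool", "tool": "divide", "args": (10, 0)}
    if kind == "error":
        return {"type": "tool", "tool": "divide", "args": (10, 2)}
    return {"type": "final", "answer": payload}

tools = {"divide": lambda a, b: a / b}
answer, history = run_agent(scripted_model, tools, "compute 10 / 2")
print(answer)
print([kind for kind, _ in history])
```

The point of the sketch is the `except` branch: a tool failure becomes another observation for the model rather than a crash, which is what "recover from errors" amounts to in practice.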

This capability complements Google’s proprietary offerings. As the recent launch of Gemini 3.1 Flash-Lite demonstrated, Google is pursuing a dual strategy of offering both proprietary API-based models for enterprise clients and open-source models for developers who need local control and customisation freedom.

Competition in the Open-Source AI Space

The release of Gemma 4 intensifies competition in the open-source AI space, where Meta’s Llama, Mistral’s models, and various Chinese AI labs are all vying for developer mindshare. The race to produce the best open model has accelerated significantly in 2026, with each new release pushing the boundaries of what is possible without proprietary infrastructure.

For enterprises, this competition is overwhelmingly positive. The availability of multiple high-quality open models reduces vendor lock-in, drives down costs, and gives organisations genuine choice in selecting the AI foundation for their applications. The entry of AI services companies backed by major tech firms has further expanded the options available to businesses seeking to deploy AI at scale.

What Developers Should Know

Developers looking to get started with Gemma 4 can access the models through Google’s official repositories, Hugging Face, and Google AI Studio. The E2B and E4B models can run on consumer-grade hardware, making them accessible to individual developers and small teams. The larger 26B MoE and 31B Dense models require more substantial hardware but remain within reach of university research labs and mid-size companies.
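A back-of-the-envelope estimate shows why the sizes map to different hardware tiers. The sketch below computes memory for the weights alone as parameters times bits per weight. The parameter counts are nominal values read off the model names, which is an assumption: the "Effective" models may store more weights than their names suggest, and real usage adds activations and cache on top.

```python
# Nominal parameter counts taken from the model names (assumption: actual
# stored weights may differ, especially for the "Effective" models).
NOMINAL_PARAMS = {
    "E2B": 2e9,
    "E4B": 4e9,
    "26B MoE": 26e9,
    "31B Dense": 31e9,
}

def weight_memory_gb(num_params, bits_per_weight):
    """Memory needed for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9

for name, params in NOMINAL_PARAMS.items():
    sizes = ", ".join(
        f"{bits}-bit: {weight_memory_gb(params, bits):.1f} GB"
        for bits in (16, 8, 4)
    )
    print(f"{name}: {sizes}")
```

Under these assumptions the 2B model needs about 4 GB at 16-bit precision, while the 31B model needs about 62 GB at 16-bit and about 15.5 GB at 4-bit, which is roughly the gap between a laptop and a workstation-class GPU.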

Google has also published comprehensive documentation, including fine-tuning guides, deployment best practices, and safety evaluation protocols. The company recommends that developers conduct their own safety testing for any application-specific deployments, particularly in high-stakes domains like healthcare, finance, and legal services.

The Apache 2.0 licence places no restrictions on commercial use, does not require modifications to be shared back with Google, and does not limit the types of applications that can be built. Its main conditions apply to redistribution, where the licence text and attribution notices must be retained and modified files marked as changed. This openness, combined with the model’s technical capabilities, positions Gemma 4 as a strong contender for the default open-source AI model of 2026.

Explore more on artificial intelligence at AI and Tech on Daily Tips.

- Google Releases Gemma 4 Under Apache 2.0 License: Most Powerful Open-Source AI Models With 400 Million Downloads - May 8, 2026
