Google Launches Gemini 3.1 Flash-Lite AI Model on 07 May 2026: What It Means for Developers and Businesses

Google released the generally available version of Gemini 3.1 Flash-Lite on 07 May 2026, marking a significant milestone in the company’s strategy to make advanced AI capabilities accessible to cost-conscious developers and businesses. The model, which Google describes as its “most cost-efficient Gemini model” to date, is optimised for low-latency, high-volume workloads and aims to match the performance of the larger Gemini 2.5 Flash across key capability areas — at a fraction of the cost.

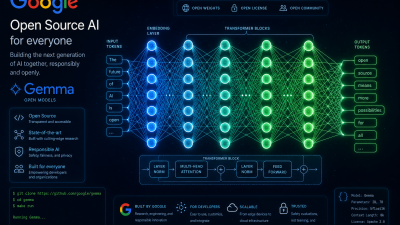

The release comes at a time when the AI industry is increasingly focused on efficiency and accessibility, with companies racing to deliver powerful models that can run affordably at scale. Google had already launched its Gemma 4 AI models in April 2026, but Gemini 3.1 Flash-Lite represents a different approach — targeting enterprise and developer use cases where speed and cost matter as much as raw capability.

Key Features of Gemini 3.1 Flash-Lite

The model introduces several improvements over its predecessor, Gemini 2.5 Flash-Lite, and brings capabilities that were previously available only in larger, more expensive models:

- Improved response quality: Google states that the model aims to match Gemini 2.5 Flash performance across benchmarks, a significant upgrade for a model positioned at the “Lite” tier.

- Better instruction following: Targeted improvements make it more reliable for complex chatbot workflows and scenarios requiring strict adherence to detailed instructions.

- Enhanced audio input: Improved quality for tasks like automated speech recognition (ASR), expanding the model’s usefulness in voice-based applications.

- Expanded thinking support: Users can now control how much reasoning the model performs by choosing from minimal, low, medium, or high thinking levels — allowing a trade-off between response quality and speed.

The model accepts multimodal inputs including text, code, images, audio, video, and PDFs, with a maximum context window of 1,048,576 tokens (approximately one million tokens) — the same as Google’s larger models. Output is capped at 65,535 tokens by default.
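To make those limits concrete, here is a minimal Python sketch of a pre-flight check an application might run before sending a request. It assumes you already have a prompt token count (for example from the Gemini API's token-counting endpoint). It also treats the input and output limits as separate caps, which is how Google's model documentation generally lists them. The function name `check_token_budget` is hypothetical.

```python
# Limits quoted above for Gemini 3.1 Flash-Lite.
MAX_INPUT_TOKENS = 1_048_576   # 2**20 tokens of input context
MAX_OUTPUT_TOKENS = 65_535     # default output cap


def check_token_budget(prompt_tokens: int, max_output_tokens: int) -> list:
    """Return a list of problems; an empty list means the request fits.

    Assumption: input and output limits are independent caps.
    """
    problems = []
    if prompt_tokens < 0 or max_output_tokens < 1:
        problems.append("prompt_tokens must be >= 0 and max_output_tokens >= 1")
    if prompt_tokens > MAX_INPUT_TOKENS:
        over = prompt_tokens - MAX_INPUT_TOKENS
        problems.append(f"prompt is {over} tokens over the input limit")
    if max_output_tokens > MAX_OUTPUT_TOKENS:
        problems.append(f"requested output exceeds the {MAX_OUTPUT_TOKENS}-token cap")
    return problems


print(check_token_budget(900_000, 8_192))      # fits: prints []
print(check_token_budget(1_100_000, 70_000))   # reports two problems
```

Returning a list rather than raising lets a caller log every violation at once before deciding whether to truncate the prompt or lower the output budget.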

Why Flash-Lite Matters for the AI Industry

The AI industry in 2026 is defined by a central tension: the demand for increasingly capable models is growing rapidly, but so are the costs of running them at scale. For many businesses, the per-token pricing of frontier models like GPT-5 or Gemini 3.0 Ultra makes them impractical for high-volume applications such as customer service chatbots, real-time content moderation, or large-scale data processing.

Flash-Lite models address this gap by offering a significantly lower cost per token while maintaining enough quality to handle the vast majority of business use cases. Google’s pricing strategy for Gemini 3.1 Flash-Lite has not been publicly disclosed in full, but the company has indicated it will be substantially cheaper than the standard Gemini 3.1 Flash model.
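Because the pricing has not been published in full, the arithmetic below uses placeholder prices only, to show how a per-token difference compounds at high volume. It is a minimal sketch that assumes prices quoted per one million input and output tokens, the usual convention on Gemini pricing pages. Every number in it is illustrative and none is a real rate.

```python
def monthly_cost_usd(requests_per_day: int, input_tokens: int, output_tokens: int,
                     input_price_per_m: float, output_price_per_m: float,
                     days: int = 30) -> float:
    """Estimated spend in USD, given prices per one million tokens."""
    per_request = (input_tokens * input_price_per_m
                   + output_tokens * output_price_per_m) / 1_000_000
    return per_request * requests_per_day * days


# PLACEHOLDER prices (USD per 1M tokens); these are NOT Google's published rates.
LARGER_MODEL = {"input_price_per_m": 1.00, "output_price_per_m": 4.00}
LITE_MODEL = {"input_price_per_m": 0.25, "output_price_per_m": 1.00}

# Hypothetical chatbot workload: 100k requests a day, 500 tokens in, 200 out.
workload = {"requests_per_day": 100_000, "input_tokens": 500, "output_tokens": 200}

larger = monthly_cost_usd(**workload, **LARGER_MODEL)
lite = monthly_cost_usd(**workload, **LITE_MODEL)
print(f"larger: ${larger:,.2f}  lite: ${lite:,.2f}  saving: {1 - lite / larger:.0%}")
```

Swap in the published rates once Google confirms them; the workload figures are equally hypothetical.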

The timing is notable. Just two days ago, Anthropic and OpenAI launched AI services companies backed by Wall Street giants, signalling that the race to commercialise AI is intensifying. Google’s release of a more efficient model is both a competitive response and a recognition that the next phase of AI adoption will be driven by cost optimisation, not just capability benchmarks.

Technical Architecture and Capabilities

Gemini 3.1 Flash-Lite supports a comprehensive set of features that make it suitable for production environments. These include grounding with Google Search, code execution, system instructions, function calling, structured output, and context caching — both implicit and explicit. It also integrates with Google’s Vertex AI RAG (Retrieval Augmented Generation) Engine for applications that need to combine the model’s knowledge with external data sources.
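As an illustration of two of those features, system instructions and structured output, the sketch below builds the JSON body for a REST `generateContent` call without sending it. It assumes the GA model ID is `gemini-3.1-flash-lite` (the article only names the preview ID). It uses field names from the public Gemini API REST reference as we understand them, and the ticket-triage schema is a made-up example; check all of these against Google's current documentation before relying on them.

```python
import json

# Assumption: the GA model ID drops the "-preview" suffix.
MODEL_ID = "gemini-3.1-flash-lite"
ENDPOINT = ("https://generativelanguage.googleapis.com/v1beta/models/"
            f"{MODEL_ID}:generateContent")


def build_ticket_triage_request(ticket_text: str) -> dict:
    """Build a request body combining a system instruction with a JSON response schema."""
    return {
        # System instruction: fixed behaviour applied to every turn.
        "systemInstruction": {"parts": [{"text": (
            "You triage customer-support tickets into billing, technical or other. "
            "Reply only with the requested JSON.")}]},
        "contents": [{"role": "user", "parts": [{"text": ticket_text}]}],
        # Structured output: ask for JSON that matches this (hypothetical) schema.
        "generationConfig": {
            "responseMimeType": "application/json",
            "responseSchema": {
                "type": "OBJECT",
                "properties": {
                    "category": {"type": "STRING"},
                    "urgent": {"type": "BOOLEAN"},
                },
                "required": ["category", "urgent"],
            },
        },
    }


body = build_ticket_triage_request("My card was charged twice this month.")
print(ENDPOINT)
print(json.dumps(body, indent=2))
```

Because the schema constrains the reply, downstream code can parse it with `json.loads` instead of scraping free text.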

The expanded thinking feature is particularly noteworthy. Previous Lite-tier models were optimised purely for speed, often at the expense of reasoning quality. By allowing developers to dial up the “thinking level” from minimal to high, Google is giving users the flexibility to use the same model for both simple tasks (where speed matters most) and more complex reasoning tasks (where accuracy is paramount).
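Here is a sketch of how an application might route requests across the four thinking levels. The routing table is entirely hypothetical. The request field `generationConfig.thinkingConfig.thinkingLevel` is an assumption based on how Google documents thinking levels for the Gemini 3 family, so confirm the exact name and accepted values for this model.

```python
import copy

# Levels named in the article, from fastest to most deliberate.
THINKING_LEVELS = ("minimal", "low", "medium", "high")

# Hypothetical routing table; tune it against your own evaluations.
LEVEL_BY_TASK = {
    "classification": "minimal",
    "extraction": "low",
    "summarisation": "medium",
    "multi_step_reasoning": "high",
}


def level_for_task(task: str) -> str:
    """Pick a thinking level for a task type, defaulting to 'low'."""
    return LEVEL_BY_TASK.get(task, "low")


def with_thinking_level(body: dict, level: str) -> dict:
    """Return a copy of a generateContent body with the thinking level set.

    The field path generationConfig.thinkingConfig.thinkingLevel is an assumption.
    """
    if level not in THINKING_LEVELS:
        raise ValueError(f"unknown thinking level: {level!r}")
    new_body = copy.deepcopy(body)  # leave the caller's dict untouched
    config = new_body.setdefault("generationConfig", {})
    config.setdefault("thinkingConfig", {})["thinkingLevel"] = level
    return new_body


base = {"contents": [{"role": "user", "parts": [{"text": "Is this review positive?"}]}]}
fast = with_thinking_level(base, level_for_task("classification"))
careful = with_thinking_level(base, level_for_task("multi_step_reasoning"))
print(fast["generationConfig"], careful["generationConfig"])
```

Keeping one model and varying only the thinking level means a single deployment can serve both quick lookups and harder reasoning requests.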

Impact on India’s Tech Ecosystem

India is one of the world’s largest and fastest-growing markets for AI adoption. Indian startups and enterprises have been among the earliest adopters of cost-efficient AI models, using them to power everything from vernacular language chatbots to agricultural advisory systems and financial fraud detection.

The release of Gemini 3.1 Flash-Lite is expected to accelerate several trends in India’s tech sector. Startups building AI-powered products can now offer more sophisticated capabilities without proportionally increasing their infrastructure costs. Enterprise customers in sectors like banking, insurance, and e-commerce can deploy AI at scale for tasks that were previously too expensive to automate.

The model’s improved audio input capabilities are particularly relevant for India, where voice-based interactions are a primary interface for millions of users, especially in regional languages. Better ASR performance could unlock new applications in healthcare (voice-based symptom checking), education (spoken language tutoring), and government services (voice-based citizen helplines).
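For voice use cases like these, audio can be sent inline alongside a text prompt. The sketch below assembles such a transcription request body. The `inlineData`/`mimeType` field names follow the Gemini REST reference as we understand it, while the prompt wording and the `language_hint` parameter are illustrative assumptions. Long recordings are usually uploaded through the Files API rather than inlined.

```python
import base64
from typing import Optional


def build_transcription_request(audio_bytes: bytes,
                                mime_type: str = "audio/wav",
                                language_hint: Optional[str] = None) -> dict:
    """Build a generateContent body that sends audio inline for transcription."""
    prompt = "Transcribe this audio verbatim."
    if language_hint:
        # Illustrative: a plain-text hint about the expected language.
        prompt += f" The speaker is most likely using {language_hint}."
    return {
        "contents": [{
            "role": "user",
            "parts": [
                {"text": prompt},
                # Inline audio travels as base64-encoded text inside the JSON body.
                {"inlineData": {"mimeType": mime_type,
                                "data": base64.b64encode(audio_bytes).decode("ascii")}},
            ],
        }],
    }


fake_audio = b"RIFF....WAVE"  # stand-in bytes, not a real recording
request = build_transcription_request(fake_audio, language_hint="Tamil")
print(request["contents"][0]["parts"][0]["text"])
```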

Competition Heats Up

Google’s release puts pressure on its competitors. OpenAI’s GPT-4o mini, Anthropic’s Claude 3.5 Haiku, and Meta’s Llama models all compete in the cost-efficient AI tier. The broader trend of AI replacing human teams, illustrated by Coinbase’s recent decision to lay off 14 percent of its workforce, means demand for affordable AI models is only going to increase.

For developers and businesses evaluating their options, the key question is whether Google’s claim of matching Gemini 2.5 Flash performance holds up in real-world testing. Early reports from developers using the preview version have been positive, with many noting that the model handles complex instruction-following tasks better than its predecessors.

Deprecation Timeline

Google has announced that the preview version of Gemini 3.1 Flash-Lite (gemini-3.1-flash-lite-preview) will be deprecated on 11 May 2026 and shut down on 25 May 2026. Developers currently using the preview are advised to migrate to the GA version immediately. This tight deprecation window reflects Google’s confidence in the GA release and its desire to consolidate its model offerings under stable, production-ready versions.
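For teams with many services pinned to the preview ID, a small guard can make the migration explicit. This is a minimal sketch. The dates come from the announcement above, but the GA model ID `gemini-3.1-flash-lite` is an assumption and the function `resolve_model` is hypothetical.

```python
from datetime import date
from typing import Optional, Tuple

PREVIEW_ID = "gemini-3.1-flash-lite-preview"
GA_ID = "gemini-3.1-flash-lite"  # assumed GA model ID; confirm in Google's model list
DEPRECATED_ON = date(2026, 5, 11)
SHUT_DOWN_ON = date(2026, 5, 25)


def resolve_model(model_id: str, today: date) -> Tuple[str, Optional[str]]:
    """Map the preview ID to the GA ID and explain why, based on the announced dates."""
    if model_id != PREVIEW_ID:
        return model_id, None  # nothing to migrate
    if today >= SHUT_DOWN_ON:
        return GA_ID, f"{PREVIEW_ID} was shut down on {SHUT_DOWN_ON.isoformat()}"
    if today >= DEPRECATED_ON:
        return GA_ID, f"{PREVIEW_ID} has been deprecated since {DEPRECATED_ON.isoformat()}"
    return GA_ID, f"{PREVIEW_ID} will be deprecated on {DEPRECATED_ON.isoformat()}"


model, warning = resolve_model(PREVIEW_ID, date.today())
print(model, "|", warning)
```

Logging the warning rather than silently rewriting the ID keeps the switch visible in production.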

As the AI landscape continues to evolve at breakneck speed, the launch of Gemini 3.1 Flash-Lite is a reminder that the race is no longer just about building the most powerful model — it is about building the most useful one at a price the world can afford. For India’s technology community and the global developer ecosystem, that is arguably the more important competition.
